2017-09-06 »

Last night I spent a few hours converting old-style (like a year ago :)) JavaScript "promises" into new-style async/await syntax. Whoa. I hereby declare that async/await is one of the approximately two good things to happen to programming languages in the last 10 years (the other one being Go's static duck typing). It's that good.

2017-09-13 »

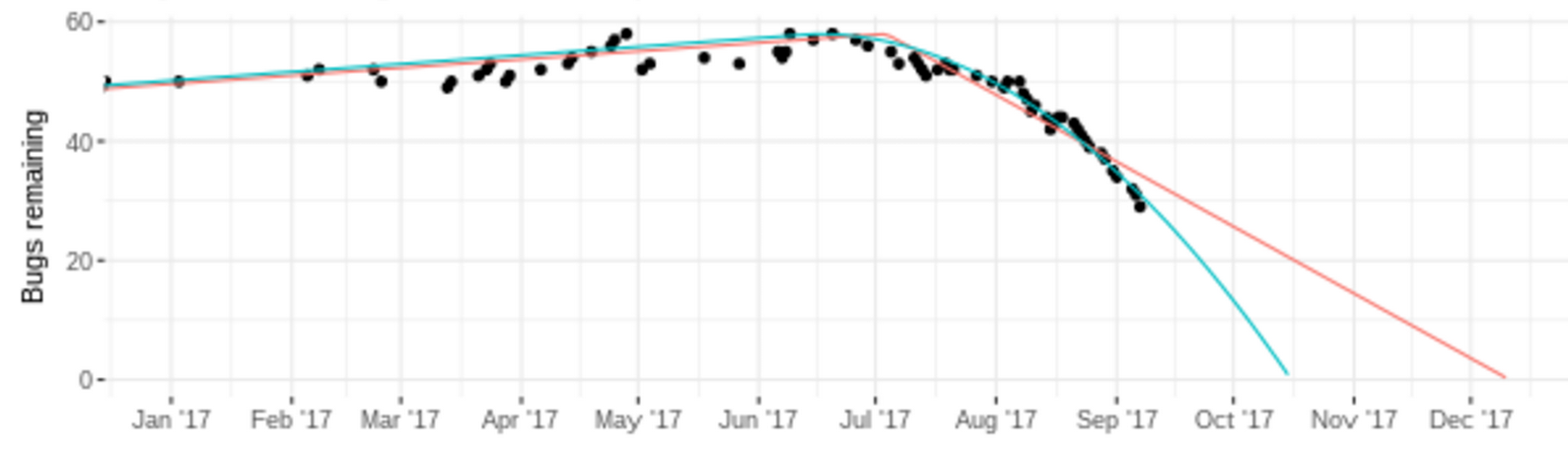

Which curve fit is best (if any)?

It's remarkable how once a big team starts working on a big hotlist of bugs (July '17 in this case), the progress rate is pretty consistent, even though the variance of time it takes to finish any one bug is surprisingly high. That's why in aggregate, it makes sense to try to estimate release milestones even though it's almost totally pointless to try to estimate the completion time of one or two bugs.

The red line assumes a linear rate of progress. The blue line is a much closer fit to the existing data, but assumes a quadratically increasing rate of progress.
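
To make the difference between the two kinds of fit concrete, here is a rough sketch in plain Python. Everything in it is an assumption for illustration: the data points are made up (they are not the numbers behind the chart), the helper names are hypothetical, and the "blue line" stand-in is simply a quadratic fit to the open-bug count, which may not be the exact model used in the plot.

```python
def fit_polynomial(xs, ys, degree):
    """Least-squares polynomial fit via the normal equations.

    Returns coefficients [c0, c1, ...] for c0 + c1*x + c2*x^2 + ...
    """
    n = degree + 1
    # Normal equations A c = b, where A[i][j] = sum(x^(i+j)).
    a = [[sum(x ** (i + j) for x in xs) for j in range(n)] for i in range(n)]
    b = [sum(y * x ** i for x, y in zip(xs, ys)) for i in range(n)]
    # Gaussian elimination with partial pivoting.
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(a[r][col]))
        a[col], a[pivot] = a[pivot], a[col]
        b[col], b[pivot] = b[pivot], b[col]
        for row in range(col + 1, n):
            factor = a[row][col] / a[col][col]
            for k in range(col, n):
                a[row][k] -= factor * a[col][k]
            b[row] -= factor * b[col]
    # Back substitution.
    coeffs = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(a[i][j] * coeffs[j] for j in range(i + 1, n))
        coeffs[i] = (b[i] - tail) / a[i][i]
    return coeffs


def evaluate(coeffs, x):
    """Value of the fitted polynomial at x."""
    return sum(c * x ** i for i, c in enumerate(coeffs))


def sum_squared_error(coeffs, xs, ys):
    """How badly the fit misses the existing data."""
    return sum((evaluate(coeffs, x) - y) ** 2 for x, y in zip(xs, ys))


def first_day_at_zero(coeffs, start, limit=2000):
    """First whole day on or after `start` where the fit predicts <= 0 open bugs."""
    for day in range(start, limit):
        if evaluate(coeffs, day) <= 0:
            return day
    return None


# Hypothetical data: open bugs sampled every 10 days. NOT the data in the chart.
days = [0, 10, 20, 30, 40, 50, 60]
open_bugs = [400, 385, 362, 335, 300, 262, 215]

linear = fit_polynomial(days, open_bugs, 1)     # straight line through the data
quadratic = fit_polynomial(days, open_bugs, 2)  # allows the rate to keep speeding up

for name, coeffs in (("linear", linear), ("quadratic", quadratic)):
    print(name,
          "error:", round(sum_squared_error(coeffs, days, open_bugs), 1),
          "predicted zero-bug day:", first_day_at_zero(coeffs, days[-1]))
```

With data shaped like this, the curved fit hugs the existing points more closely and also predicts an earlier finish. That is exactly why it's tempting, and why it deserves suspicion: a closer fit to the past only helps if you believe the team will keep speeding up.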

2017-09-14 »

I watched a YouTube video of a demo of this game. It looked magnificently boring and essentially unplayable. And very pretty. But doomed. Still, I kinda hope they pull it off. I wish more crazy ideas would get pulled off.

http://www.kotaku.co.uk/2016/09/23/inside-the-troubled-development-of-star-citizen

2017-09-19 »

Nice! Gmail now auto-asks the questions you were afraid to ask, such as "What is this?" when the meeting invite has no detailed description :)

2017-09-20 »

Great news, everyone! Someone completely eliminated the need for network queues, so we can stop trying to figure out active queue management.

2017-09-21 »

Playing with some super slow internet today (~256kbits/sec). One thing it's making clear is how abysmal we are at caching the parts of web pages that never change. Ironically, modern web apps should be better than old-style static HTML in this way: we can download only the content, not the fancy page structure, and cache the rest. But that's definitely not what happens. (Once a web app finally loads, navigating around in it is fairly tolerable.)

Mobile apps feel vastly quicker, mainly because they're already downloaded. But... so are web apps that I use all the time! If we care about the web ecosystem, maybe just the caching part could use more work.

2017-09-25 »

Two name-pronunciation anecdotes:

1) I lived in Montreal for quite a while, where there are lots of francophones. Most people there pronounce my name "wrong," something like "Avérie" (Ah-vey-ree). Of course, I can't actually replicate it. But it's a pretty good way to pronounce my name, honestly, and I kinda like it.

2) "Linux" is famous for having a non-obvious pronunciation. There is a vintage audio file[1] where Linus Torvalds says, "Hello, this is Linus Torvalds, and I pronounce Linux as Linux." The way he phrased this sentence is very particular, and I think a lot of people don't understand it. He made the recording after a mailing list thread in which people demanded to know the "right" way. His stated opinion was basically, you should pronounce it how you pronounce Linus. Linus might be a name of someone you know. You almost certainly don't pronounce Linus the way Torvalds does, but that doesn't mean you're wrong. Anyway, however you pronounce it, Linux is pronounced the same way, but with an x. The discussion went on until he gave in and made the recording, but specifically made sure to say his name, and to emphasize "and I pronounce Linux as Linux."

I always liked that story. I have also always been amused by the people who feel the need to correct your pronunciation of "Linux" if you say it the obvious way; they even point at the audio recording as proof. But the audio recording doesn't mean what they think it does.

2017-09-27 »

The pro/con lists and "how to succeed" sections here look eerily accurate to me.

https://blog.ycombinator.com/three-paths-in-the-tech-industry-founder-executive-or-employee/

2017-09-28 »

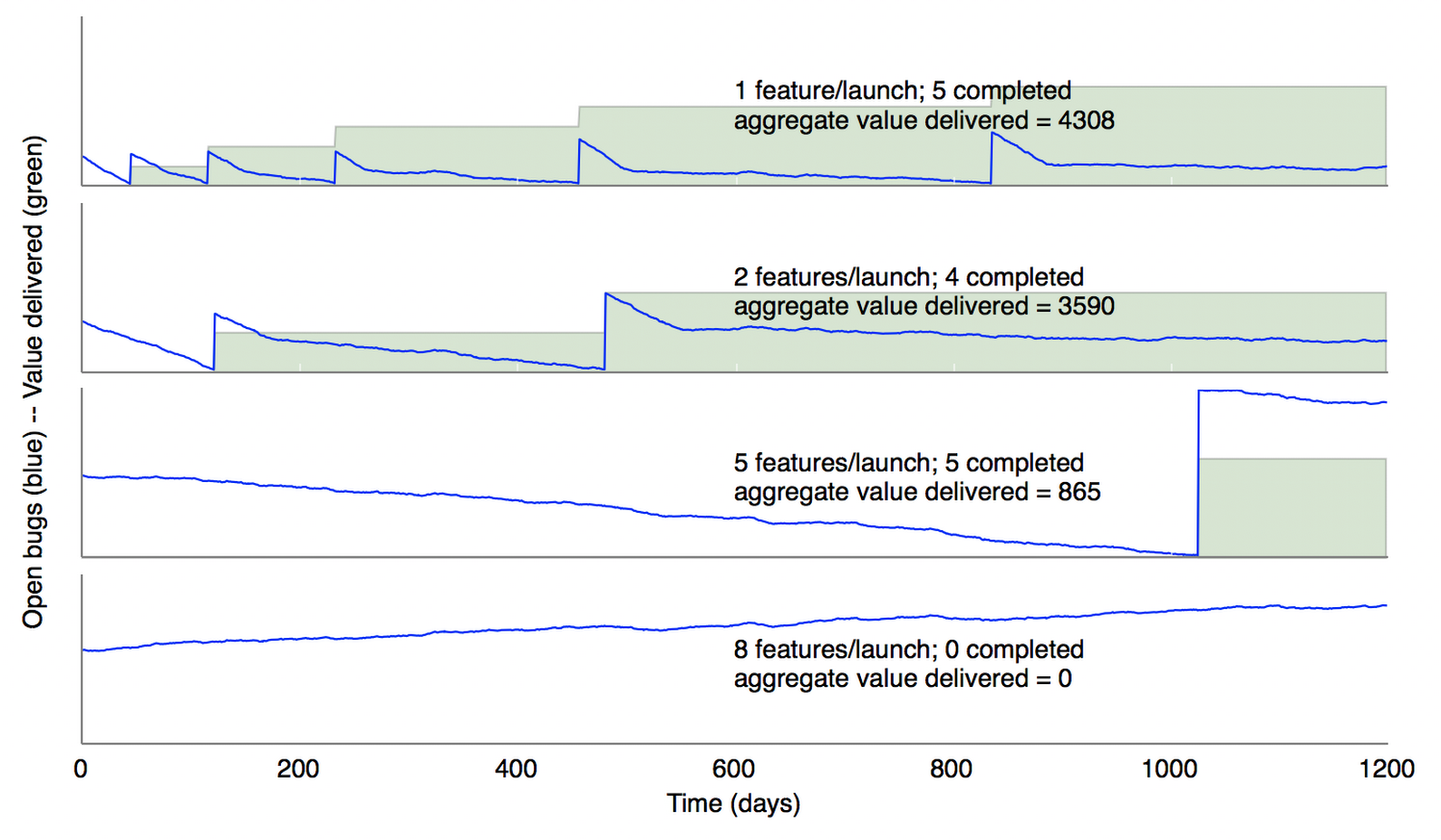

SimSWE part 2: The perils of multitasking

I updated my SWE simulator[1] from a few weeks ago. This time, instead of PMs changing their minds about features, we have PMs and execs who choose not to make up their minds at all, letting launch requirements get bloated.

These four charts have the same eng team, same set of features, same pattern of bugs getting filed, same work rate, etc. The only difference is how many "features" (one "bug" can be tagged as required for one or more features) are required for a particular milestone. In this version of the simulator, when we hit a given milestone (reach ~0 bugs in the related features), we launch the product with those features intact. From then on, we have to maintain the product (bugs in already-launched features take precedence) as well as try to develop new features, all with the same team. Thus, each subsequent feature takes longer to launch, and eventually new features can't launch at all without expanding the team size. (This is why products need bigger teams as they launch more features. In turn, that's why they need more and more revenue.)
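
The two rules in that paragraph (launched-feature bugs go first, and a milestone launches when its features have no open bugs) can be sketched in a few lines of Python. This is not the simulator's code. The `Bug` class and both function names are hypothetical, and the sketch uses exactly zero open bugs where the simulator uses "~0".

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Bug:
    bug_id: int
    features: frozenset  # features this bug is tagged as required for


def pick_next_bug(open_bugs, launched, milestone):
    """Choose the next bug: launched-feature bugs first, then milestone bugs."""
    for wanted in (launched, milestone):
        for bug in open_bugs:
            if bug.features & wanted:
                return bug
    return None  # nothing relevant to work on right now


def milestone_reached(open_bugs, milestone):
    """A milestone launches once no open bug touches any of its features."""
    return not any(bug.features & milestone for bug in open_bugs)


# Hypothetical state: "login" has already shipped, "search" is the next milestone.
open_bugs = [
    Bug(1, frozenset({"search"})),
    Bug(2, frozenset({"login", "search"})),
    Bug(3, frozenset({"export"})),
]
launched = {"login"}
milestone = {"search"}

print(pick_next_bug(open_bugs, launched, milestone))
print(milestone_reached(open_bugs, milestone))
```

Even in this toy version you can see where the slowdown comes from: every launched feature adds to the pile of bugs that jump the queue ahead of new work.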

When a feature launches, we consider that to be "value" provided to customers. It's a rough proxy for revenue, or increased customer retention, or whatever. Providing value takes time: if I buy a product, I don't get value from it instantaneously. I expect it to keep delivering value as time passes. So the height of the green line is the instantaneous value being delivered (in this simulation, it's simply proportional to the number of features shipped). The green area under the curve (the integral of instantaneous product value over time) is the total value that has been delivered so far.
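
Here is a minimal Python sketch of that "area under the green line" calculation, assuming one unit of value per shipped feature per day. The launch schedules are invented for illustration and are not the simulator's output. In particular, they assume the batched team ships everything on the same day the incremental team ships its last feature.

```python
def instantaneous_value(launches, day):
    """Height of the value line: features already launched as of `day`."""
    return sum(features for launch_day, features in launches if launch_day <= day)


def total_value(launches, horizon):
    """Area under the value line from day 0 to `horizon`, in feature-days."""
    return sum(instantaneous_value(launches, day) for day in range(horizon))


# Hypothetical schedules as (launch_day, features_shipped) pairs.
incremental = [(200, 2), (500, 2), (900, 1)]  # ship a couple of features at a time
batched = [(900, 5)]                          # hold everything for one big launch

horizon = 1200
print("incremental:", total_value(incremental, horizon), "feature-days")
print("batched:    ", total_value(batched, horizon), "feature-days")
```

Both schedules end up with the same five features shipped. The difference in total comes entirely from how long each feature has been in customers' hands by the time you stop counting.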

Using this (admittedly oversimplified) model, for this hypothetical product, shipping two features at a time would deliver 4x as much value, after 1200 days, as shipping 5 features at a time. With the same engineering team and the same features! In short, that's what makes SpaceX efficient and others inefficient. SpaceX might work engineers a little harder, but that's not the real benefit. The real benefit is that SpaceX has a clear idea of what really, really needs to happen next, and they deliver it incrementally. Others, mostly, don't.

(You might wonder what happens if we extend the plot to, say, 2000 days. Eventually, the team launches all the features it's capable of maintaining, and just keeps churning away at bugs. The value ratio between chart #2 and chart #3 keeps decreasing, but the absolute difference never goes away. Also note that value delivery usually drops off at a fixed point in the future, when your feature isn't needed anymore (e.g. someone else builds a better one). So you can't just extend the time horizon forever to try to make it look better.)

Not shown: the benefits of being able to change your direction, based on customer feedback, after launch #1. The assumption that we are building the same features, but in a different order, is not very realistic. In reality, what you learn after launch #1 is so valuable that you end up changing product direction quite a lot, so early time spent working on features for future milestones is largely wasted.

(The team that completely fails to focus, chart #4, never launches anything because there are so many moving parts that new bugs are found faster than you can fix them. The usual response is to just launch something crappy and plan to fix it in v2. Notice how different that is from the first three charts, where we make no quality sacrifices at all, for a limited subset of functionality, and still launch much earlier. And then we "unrealistically" commit to fixing the bugs in already-launched features before launching new features. You could say that's the difference between many Apple products and other products.)

[1] SWE simulator v1
