2013-05-03

One of the scariest yet most informative periods of my life was a brief stint building digital control systems for mechanical devices. In university we learned about this stuff as Control Theory, PID controllers, etc.

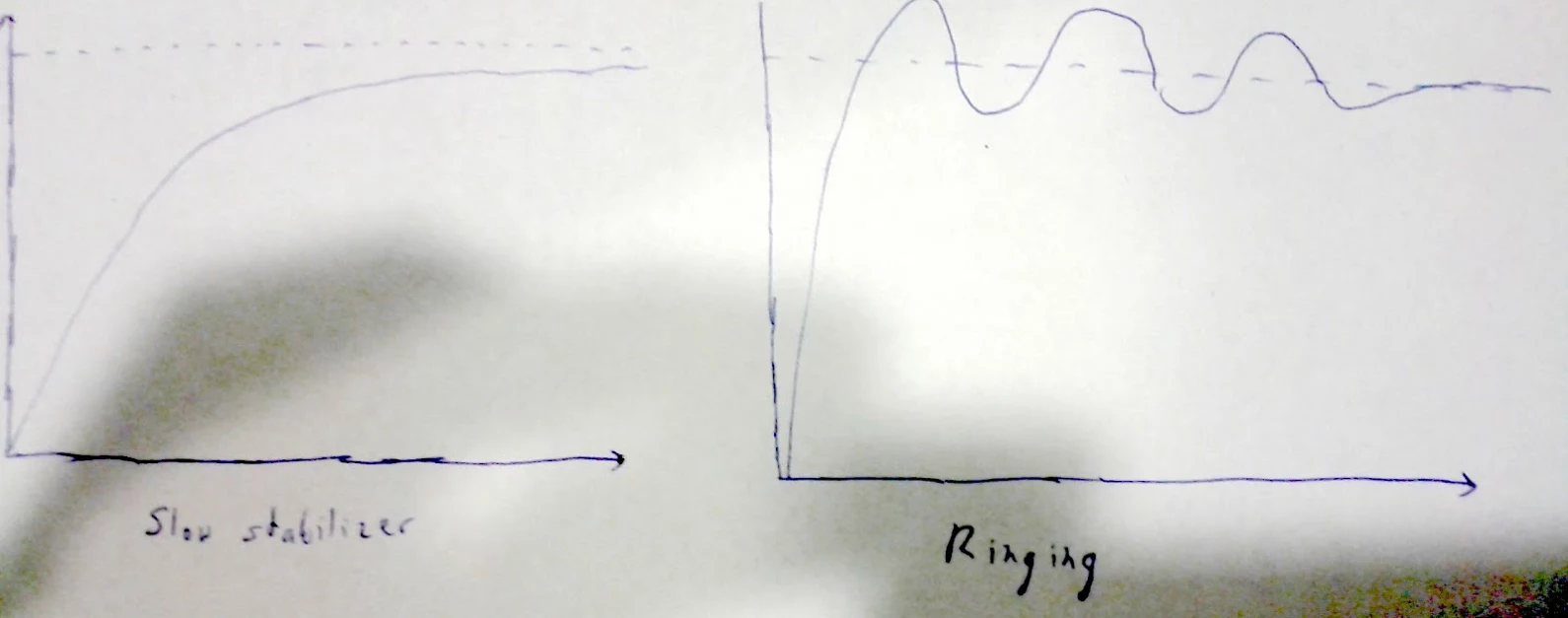

Approximately the first thing you learn about control theory is the concept of "overshoot." Say you have a robot, and it's too far to the left, so you power up the motor full blast to try to move it to the right. Then, once it gets there, you turn off the motor. That's too late; you'll probably end up flying past where you wanted to be, so you have to go back the other way, probably fly past again, etc. That's the diagram on the right.

If, instead, you run the motor a little slower - or, as they soon taught us, run it "proportionally" to the distance from the target (that's the "P" in "PID controller") - you can adjust it to avoid overshoot, getting a result more like the one on the left. In most cases, the diagram on the left is better because it's less crazy, even though you don't get to the destination right away.
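To make the two diagrams concrete, here's a minimal sketch in Python. It assumes a toy robot: a unit mass with some friction, simulated in small time steps. The constants (`MAX_FORCE`, `FRICTION`, `TARGET`, the gain `kp`) and the function names are made up for illustration, not taken from any real controller.

```python
MAX_FORCE = 5.0   # assumed motor limit, arbitrary units
FRICTION = 2.0    # assumed drag coefficient
TARGET = 1.0      # where we want the robot to end up


def full_blast(error):
    """Bang-bang: full power toward the target, whichever side we're on."""
    return MAX_FORCE if error > 0 else -MAX_FORCE


def proportional(error, kp=0.5):
    """The "P" in PID: push in proportion to the distance from the target."""
    return kp * error


def simulate(controller, steps=3000, dt=0.01):
    """Explicit-Euler simulation of a unit mass; returns the position trace."""
    x, v = 0.0, 0.0
    trace = []
    for _ in range(steps):
        accel = controller(TARGET - x) - FRICTION * v
        x += dt * v
        v += dt * accel
        trace.append(x)
    return trace


def overshoot(trace):
    """How far past the target the robot ever got (0.0 if it never passed it)."""
    return max(0.0, max(trace) - TARGET)


if __name__ == "__main__":
    for name, controller in [("full blast", full_blast),
                             ("proportional", proportional)]:
        trace = simulate(controller)
        print(f"{name:>12}: overshoot={overshoot(trace):.3f}, "
              f"final={trace[-1]:.3f}")
```

With these made-up numbers, the full-blast controller flies past the target and has to come back, while the proportional one creeps up on the target from below and never passes it.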

Now let's relate that back to software engineering. Let's say you've got a release pipeline: dev->staging->dogfood->canary->prod. For simplicity, let's assume you're doing it like Chrome, and each phase is running a slightly older version of the software than the previous one, because versions progress through the phases linearly (probably getting patched along the way).

Now let's say that this week, you've got a set of new cool stuff in dev that you want to get to customers (prod) as soon as possible.

The default option is to keep running the pipeline as is. That gives you something like the diagram on the left: you don't get to the goal line (the dotted line in the picture) very fast, but along the way everything is sane and predictable and once you finally get there, you're there.

An alternative is to make an exception and disrupt your pipeline. Take the version from dev, and ram it through the pipeline as fast as you can, fixing bugs as fast as you can along the way. This will get you to the goal - released features - much faster. But it will probably look a bit like the diagram on the right; you won't exactly hit your goal, and your release process might start making weird patterns. Maybe you have to revert to a previous version. Once you've reverted, maybe you'll roll forward again, but you're even more desperate this time, so maybe you overshoot one more time. And so on.

Once you're into this overshoot/undershoot pattern, it's actually hard to get back out. The worse you overshoot, the more desperate you get, and the more likely you are to overshoot again. Sometimes it can take longer to finally settle on the goal with this overshoot pattern than with a slow-stabilization pattern. By then, you might have a new input (i.e. new crazy-important features) before you even finish bouncing from the last one. (In the diagram they both stabilize after about the same delay, but either graph could actually be longer or shorter than the other depending on the amount of over/under compensation you use.)

For a software release manager, the P knob in the PID controller is the ratio of the number of changes to the length of time in your release cycle, aka the "time pressure" on your release.

Another thing I learned in my control systems classes was: there are two ways to build a control system. Many traditional PID controllers are "designed" by calculating some basic ratios to start with, and then twiddling knobs until the results come out pretty decently, then locking in those settings. That's physical-world engineering for you. It sounds silly, but it works.
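The knob-twiddling approach can be sketched in a few lines of Python. This is only an illustration under assumptions: the plant is a toy unit mass with friction, the "basic ratio" is the critical-damping gain for that toy model, and the candidate multipliers, the 10% overshoot limit, and the 2% settling band are all arbitrary choices of mine.

```python
FRICTION = 2.0   # assumed drag coefficient of the toy plant
TARGET = 1.0     # desired position


def step_response(kp, steps=3000, dt=0.01):
    """Explicit-Euler simulation of a unit mass under P-only control."""
    x, v = 0.0, 0.0
    trace = []
    for _ in range(steps):
        accel = kp * (TARGET - x) - FRICTION * v
        x += dt * v
        v += dt * accel
        trace.append(x)
    return trace


def overshoot(trace):
    """How far past the target the position ever got (0.0 if never)."""
    return max(0.0, max(trace) - TARGET)


def settling_time(trace, tolerance=0.02, dt=0.01):
    """Time after which the position stays within tolerance of the target."""
    last_outside = -1
    for i, x in enumerate(trace):
        if abs(x - TARGET) > tolerance:
            last_outside = i
    return (last_outside + 1) * dt


# Step 1: calculate a basic ratio to start from. For this toy model,
# kp = friction^2 / 4 is the critically damped gain.
starting_kp = FRICTION ** 2 / 4

# Step 2: twiddle the knob around that starting point.
candidates = [starting_kp * m for m in (0.5, 1.0, 2.0, 4.0, 16.0)]

# Step 3: keep the fastest-settling gain that doesn't overshoot too much,
# then lock it in.
MAX_OVERSHOOT = 0.10
acceptable = [kp for kp in candidates
              if overshoot(step_response(kp)) <= MAX_OVERSHOOT]
best_kp = min(acceptable, key=lambda kp: settling_time(step_response(kp)))

if __name__ == "__main__":
    for kp in candidates:
        trace = step_response(kp)
        print(f"kp={kp:5.2f}  overshoot={overshoot(trace):.3f}  "
              f"settles after {settling_time(trace):.2f}s")
    print("locked in kp =", best_kp)
```

The point of the sketch is the shape of the process, not the numbers: start from a rough calculation, try a handful of settings, measure, and pick one that is fast enough without bouncing. The largest gain in the list is never the one that gets locked in, because it overshoots far too much.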

The other way is to do some really complicated math and come up with the theoretically perfect compensator (which is probably not a simple PID controller). That's either fantasy or literal rocket science, depending on whether you're good at it or not. Most of us are not. :)

You fail the class if you just turn the P knob all the way up to max because you're in a hurry to get there as fast as possible.
