2016-04-17

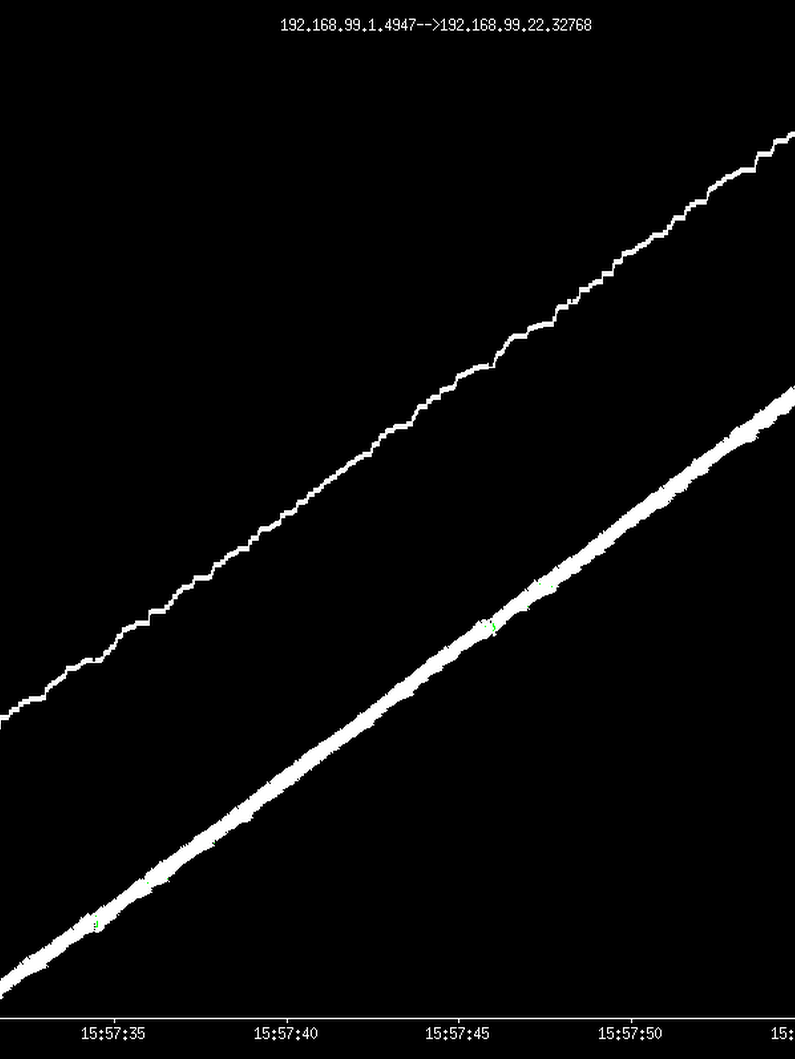

Above: 40% packet loss with my toy TCP, "semisonic", a port of someone's hack to our slightly older TCP stack, plus some additional lobotomization so the RTO timer backs off less. Unlike the TCP THERMAL variant from my previous post, this one doesn't fill up the entire receive window, so it doesn't overfill queues and cause an unnecessary increase in latency. Interestingly, by keeping latency lower, we can retry lost packets sooner, which gets similar throughput with less socket buffer memory required on each end.
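To see why lower latency saves buffer memory, here is a crude back-of-envelope model; it is my own sketch, not anything measured in these traces. It assumes a sender must hold every byte until it is ACKed, that each (re)transmission attempt takes about one RTT to resolve, and that losses are independent. Under those assumptions the needed buffer is roughly the bandwidth-delay product scaled by the expected number of attempts, 1 / (1 - loss). The function name `send_buffer_bytes` and the 1 MB/s stream rate are hypothetical.

```python
# Crude model (assumptions, not measurements): a segment needs on average
# 1 / (1 - loss) attempts, each taking ~one RTT, so it stays in the send
# buffer for roughly rtt / (1 - loss) seconds.

def send_buffer_bytes(rate_bytes_per_s, rtt_s, loss):
    """Rough send-buffer size needed to sustain rate_bytes_per_s (hypothetical helper)."""
    if not 0.0 <= loss < 1.0:
        raise ValueError("loss must be in [0, 1)")
    expected_attempts = 1.0 / (1.0 - loss)
    # Bandwidth-delay product, stretched by the expected number of attempts.
    return rate_bytes_per_s * rtt_s * expected_attempts


if __name__ == "__main__":
    rate = 1_000_000  # hypothetical 1 MB/s live video stream
    for rtt in (0.05, 0.5):  # short queue vs. a queue bloated by a full receive window
        kb = send_buffer_bytes(rate, rtt, loss=0.4) / 1000
        print(f"RTT {rtt * 1000:.0f} ms at 40% loss -> ~{kb:.0f} kB of buffer")
```

In this model the buffer scales linearly with RTT, so a 10x fatter queue needs roughly 10x the socket buffer for the same throughput.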

On the other hand, Linux's (and maybe everybody's) RTO backoff is ridiculously aggressive, far too much so to work well in high-loss environments. Without my changes, the traces had multi-second periods with no data sent at all, which is obviously not great for live video. People have spent a lot of time tuning congestion control for the "normal" TCP flow, but seemingly not for the backoff case. I wonder if BBR fixes that code path too.
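A quick sketch of how those multi-second gaps arise. Standard TCP (RFC 6298) doubles the RTO on each consecutive timeout of the same segment; this assumes the RTO starts at Linux's 200 ms floor (`TCP_RTO_MIN`) and is capped at 120 s (`TCP_RTO_MAX`). The "gentle" policy below, with a 1.5x factor and a 1 s cap, is purely hypothetical and is not what semisonic actually does; the helper names are also made up for illustration. At 40% independent loss, six losses in a row happen about 0.4% of the time, which is frequent when you send many segments.

```python
# Sketch of RTO backoff when the same segment is lost repeatedly.
# Assumptions: RTO starts at 200 ms; standard policy doubles up to 120 s;
# the "gentle" policy (x1.5, 1 s cap) is hypothetical.

def rto_schedule(base_rto_s, backoff, max_rto_s, timeouts):
    """Return the RTO waited before each retransmission of the same segment."""
    schedule = []
    rto = base_rto_s
    for _ in range(timeouts):
        schedule.append(min(rto, max_rto_s))
        rto = min(rto * backoff, max_rto_s)
    return schedule


def idle_time(schedule):
    """Total time spent waiting on the timer across consecutive timeouts."""
    return sum(schedule)


if __name__ == "__main__":
    loss = 0.4  # rough model of the 40% test, assuming independent losses
    timeouts = 6
    standard = rto_schedule(0.2, 2.0, 120.0, timeouts)
    gentle = rto_schedule(0.2, 1.5, 1.0, timeouts)  # hypothetical policy
    print("P(%d losses in a row) = %.4f" % (timeouts, loss ** timeouts))
    print("standard:", [round(t, 3) for t in standard], "total", round(idle_time(standard), 3))
    print("gentle:  ", [round(t, 3) for t in gentle], "total", round(idle_time(gentle), 3))
```

With doubling, the fifth and sixth waits alone are 3.2 s and 6.4 s of silence; capping the backoff keeps every individual gap at or under a second in this toy model.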
